At this point, I was beginning to suspect that it was going to make sense to use PySpark instead of Pandas for the data processing. However, we still ran into problems when trying to convert back into a spark array to write back out to the file system around the types of the various columns. Without this setting, each row must be individually pickled (serialized) and converted to Pandas which is extremely inefficient. This allows the data to be converted into Arrow format, which can then be converted via zero allocation pyarrow to convert into a Pandas dataframe. This setting enables the use of the Apache Arrow data format. In order to get a pandas dataframe you can use: pandas_df = df.toPandas()Īlongside the setting: (".enabled", "true") options(header='true', inferSchema='true', though the pandas read function does work in Databricks, we found that it does not work correctly with external storage. The file could then be loaded into a Spark dataframe via: df = sqlContext ("fs.azure.createRemoteFileSystemDuringInitialization", "false") ("fs.azure.createRemoteFileSystemDuringInitialization", "true")ĭbutils.fs.ls("abfss://.net/") In the notebook, the secret can then be used to connect to ADLS using the following configuration: ("fs.", (scope = "", key = "DataLakeStore")) Initial-manage-principal must be set to "users" when using a non-premium-tier account as this is the only allowed scope for secrets.ĭatabricks secrets put -scope -key DataLakeStore Create a scope for that secret using:ĭatabricks secrets create-scope -scope -initial-manage-principal users To create the secret use the command databricks configure -token, and enter your personal access token when prompted. 
Create a "secret" in the Databricks account.Install the Databricks CLI using pip with the command pip install databricks-cli.Create a personal access token in the "Users" section in Databricks.This was done using a secret which can be created using the CLI as follows: However, the data we were using resided in Azure Data Lake Gen2, so we needed to connect the cluster to ADLS. This is installed by default on Databricks clusters, and can be run in all Databricks notebooks as you would in Jupyter. As I've mentioned, the existing ETL notebook we were using was using the Pandas library. You can either upload existing Jupyter notebooks and run them via Databricks, or start from scratch. Once the Databricks connection is set up, you will be able to access any Notebooks in the workspace of that account and run these as a pipeline activity on your specified cluster. However, you pay for the amount of time that a cluster is running, so leaving an interactive cluster running between jobs will incur a cost. However, if you use an interactive cluster with a very short auto-shut-down time, then the same one can be reused for each notebook and then shut down when the pipeline ends. Using job clusters, one would be spun up for each notebook. This is also an excellent option if you are running multiple notebooks within the same pipeline. These can be configured to shut down after a certain time of inactivity. An interactive cluster is a pre-existing cluster. Therefore, if performance is a concern it may be better to use an interactive cluster. It should be noted that cluster spin up times are not insignificant - we measured them at around 4 minutes. If you choose job cluster, a new cluster will be spun up for each time you use the connection (i.e. Here you choose whether you want to use a job cluster or an existing interactive cluster. By choosing compute, and then Databricks, you are taken through to this screen: To start with, you create a new connection in ADF.

This means that you can build up data processes and models using a language you feel comfortable with. NET!), though only Scala, Python and R are currently built into Notebooks.

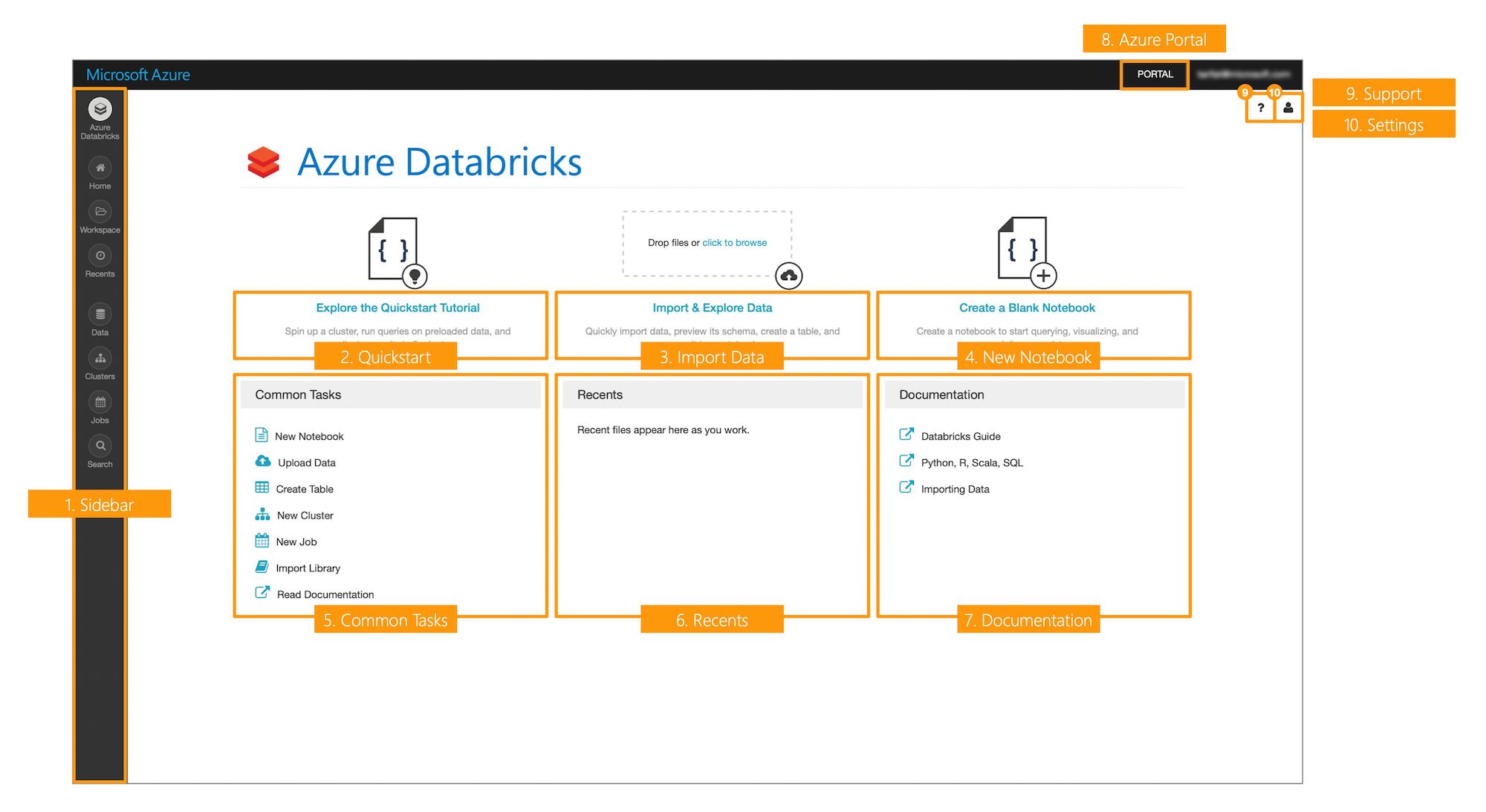

It allows you to run data analysis workloads, and can be accessed via many APIs (Scala, Java, Python, R, SQL, and now. Databricks is built on Spark, which is a "unified analytics engine for big data and machine learning". This notebook could then be run as an activity in a ADF pipeline, and combined with Mapping Data Flows to build up a complex ETL process which can be run via ADF. As part of the same project, we also ported some of an existing ETL Jupyter notebook, written using the Python Pandas library, into a Databricks Notebook. I recently wrote a blog on using ADF Mapping Data Flow for data manipulation. By Carmel Eve Software Engineer I 10th May 2019
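To make the job cluster versus interactive cluster trade-off concrete, here is a rough sketch of the start-up overhead for a single pipeline run. It uses the roughly 4 minute spin-up time we measured; the function name is hypothetical, and it assumes the interactive cluster is stopped when the pipeline begins and stays up between notebooks. It does not model the cost of the auto-shut-down idle period.

```python
# Approximate spin-up time measured in this post; treat it as a rough figure.
SPIN_UP_MINUTES = 4


def spin_up_overhead(notebook_count: int, use_job_clusters: bool) -> int:
    """Return the total minutes spent waiting for clusters in one pipeline run.

    Assumption: with job clusters, every notebook activity starts its own
    cluster; with an interactive cluster, one start serves every notebook.
    """
    if notebook_count < 0:
        raise ValueError("notebook_count must not be negative")
    if notebook_count == 0:
        return 0
    cluster_starts = notebook_count if use_job_clusters else 1
    return cluster_starts * SPIN_UP_MINUTES


if __name__ == "__main__":
    for count in (1, 3, 5):
        print(
            count,
            "notebooks:",
            spin_up_overhead(count, use_job_clusters=True),
            "min with job clusters,",
            spin_up_overhead(count, use_job_clusters=False),
            "min with one interactive cluster",
        )
```

For a pipeline with a single notebook the two options come out the same, so the interactive cluster only starts to pay off once several notebooks share it.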
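The ADLS connection settings are easy to mistype, so it can help to build the strings in one place. The sketch below is only an illustration: the helper names are hypothetical, and it assumes the account-key setting and abfss URI follow the usual ADLS Gen2 pattern ending in dfs.core.windows.net. The helpers take a setter function rather than calling Spark directly, so they can be tried outside a notebook.

```python
from typing import Callable


def adls_account_key_setting(storage_account: str) -> str:
    """Name of the Spark setting that holds the storage account key (assumed pattern)."""
    return f"fs.azure.account.key.{storage_account}.dfs.core.windows.net"


def abfss_uri(file_system: str, storage_account: str, path: str = "") -> str:
    """Build an abfss:// URI for a path inside an ADLS Gen2 file system."""
    return (
        f"abfss://{file_system}@{storage_account}.dfs.core.windows.net/"
        f"{path.lstrip('/')}"
    )


def configure_adls(
    set_conf: Callable[[str, str], None],
    storage_account: str,
    account_key: str,
) -> None:
    """Register the account key through the supplied setter function."""
    set_conf(adls_account_key_setting(storage_account), account_key)


# In a Databricks notebook, where `spark` and `dbutils` already exist, the
# call might look like this (placeholders left unfilled on purpose):
#
#   configure_adls(
#       spark.conf.set,
#       "<storage-account-name>",
#       dbutils.secrets.get(scope="<scope-name>", key="DataLakeStore"),
#   )
#   dbutils.fs.ls(abfss_uri("<file-system-name>", "<storage-account-name>"))
```

Passing spark.conf.set in as an argument keeps the secret lookup in the notebook itself, and means the string-building can be checked with a plain dictionary.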
